Agentforce and the Limits of CRM-Era Intelligence

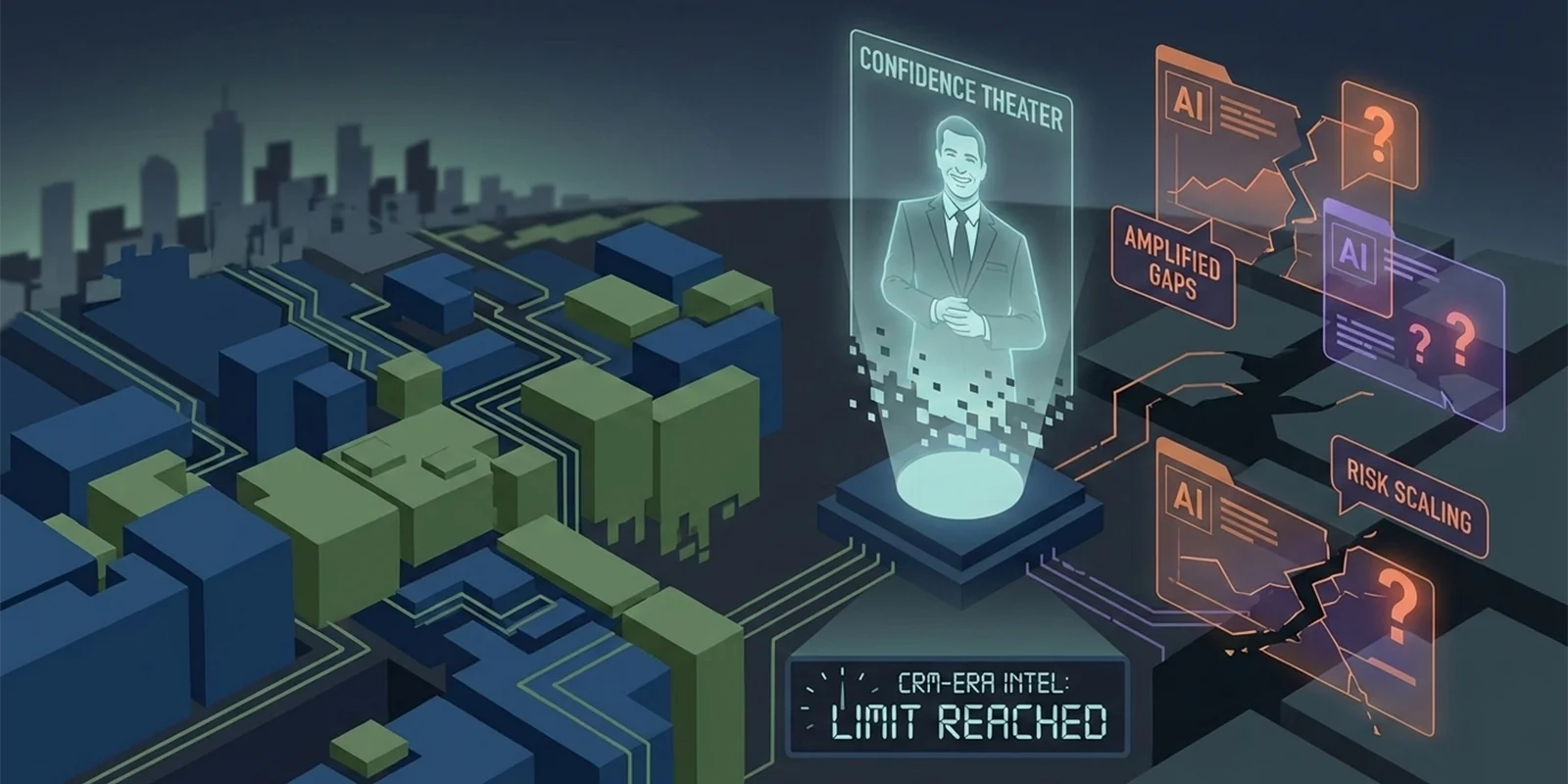

Agentforce doesn’t make Salesforce intelligent; it industrializes its blind spots. It takes the limitations baked into Salesforce’s core and scales them with confidence. Salesforce was built to model reporting behavior, not real execution, and layering AI on top of that mismatch does not resolve it. The more intelligence Salesforce adds, the more its abstraction shows through. What looks like progress is actually amplification.

Salesforce still positions itself as the foundation of modern sales, with Agentforce framed as the AI layer that finally turns data into insight. But AI doesn’t create understanding on its own. It multiplies whatever structure exists beneath it. When the underlying system captures only what was logged after the work, AI compounds the gaps as efficiently as it compounds the gains.

That design made sense in a world where selling was slow, linear, and desk-bound. Reps executed first, then documented calls, notes, and activities so leadership could review performance later. That model breaks down the moment execution happens in the real world. In field sales and CPG environments, the work is the data. Shelf conditions, competitive pressure, compliance gaps, and execution quality are not summaries—they are the reality. Salesforce captures what remains once that reality has been flattened into generic objects.

Agentforce operates on that flattened view. When an AI system reasons over visits, tasks, and free-text notes, it isn’t reasoning about what actually happened; it’s reasoning about how it was reported. That isn’t intelligence. It’s automation with confidence. And confidence without context scales risk faster than humans ever could.

This is the failure legacy CRM platforms avoid naming. Salesforce optimizes for visibility, hygiene, and comfort. It answers the questions executives like to ask—Is the data filled in? Is the pipeline visible? Are reps compliant?—while avoiding the harder ones that actually drive performance: Where is execution breaking right now? What risks look fine on dashboards but fail in the field? What decisions are being made without context? Once reality is compressed for reporting, those answers disappear.

Judgment cannot be retrofitted after the fact. It lives in constraints, trade-offs, and the alternatives that were available but not taken. Salesforce was never designed to capture that structure, so Agentforce ends up narrating decisions rather than supporting them. The result is confidence theater: systems that sound intelligent while quietly compounding operational risk.

The future isn’t a smarter CRM layered with AI. It’s systems built around execution itself, where data is created as a byproduct of work instead of reconstructed later for reporting. Platforms that model reality correctly don’t need AI to guess what matters. Intelligence compounds naturally because the foundation is sound. Salesforce’s problem is not that it failed; it is being stretched beyond what it was ever designed to do.